Athena Steimberg of Odigo discusses some of the realities of chatbots before sharing her advice for making them work in the contact centre.

A majority of companies consider conversational agents a lever for their digital transformation; at least, that is what numerous studies claim.

These same studies also agree that mass adoption of bots is still far from being achieved. And when companies integrate a chatbot – sometimes on their website, often on their Facebook page – it rarely meets their expectations or those of their users.

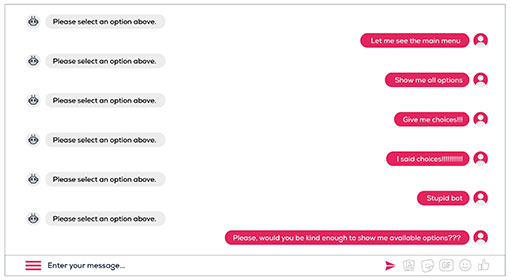

Many chatbots have failed to impress, either through the relevance of their answers or the richness of their conversation. Although always available, these conversational agents are far from consistent in providing immediate and useful answers.

This risks disappointing the early adopters of these innovative solutions, and a first negative experience can be fatal to the adoption of a new service.

Chatbots: Between Fantasies and Untenable Promises

Why hasn’t the chatbot always kept its promises? Part of the answer lies in the fantasies often associated with machine learning (ML). Indeed, this idea of automatic learning led some companies to think that the chatbot would be able to learn everything by itself. However, the reality is quite different.

A conversational agent does not think. Chatbots are complex programs that apply man-made decision rules and must be trained to recognize “situations”. They simply apply a conversation scenario that responds to the recognition of intentions – through automatic speech recognition (ASR) or natural language understanding (NLU) – or react according to the user’s choices (through choice buttons or in a carousel, for example).
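To make this concrete, here is a minimal sketch of a bot that "does not think": it recognises an intent from hand-written keyword rules, or takes it directly from a button choice, and then returns the scripted reply for that intent. The intent names, keywords and replies are invented for illustration; a real deployment would rely on an NLU engine rather than keyword matching.

```python
import re

# Hypothetical man-made decision rules: keywords that signal each intent.
INTENT_KEYWORDS = {
    "track_order": {"order", "delivery", "parcel"},
    "reset_password": {"password", "login"},
}

# Hypothetical conversation scenario: one scripted reply per intent.
SCENARIO = {
    "track_order": "Please give me your order number.",
    "reset_password": "I can send you a reset link. What is your email address?",
    "fallback": "Sorry, I did not understand. Let me transfer you to an agent.",
}


def recognise_intent(message: str) -> str:
    """Return the first intent whose keywords appear in the message."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    for intent, keywords in INTENT_KEYWORDS.items():
        if words & keywords:
            return intent
    return "fallback"


def reply(message: str = "", button: str = "") -> str:
    """Answer from a button choice if one was clicked, else from free text."""
    intent = button if button in SCENARIO else recognise_intent(message)
    return SCENARIO[intent]


print(reply("Where is my parcel?"))
print(reply(button="reset_password"))
print(reply("Tell me a joke"))
```

Anything outside the rules falls through to the fallback reply, which is exactly why the bot cannot "learn everything by itself".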

A conversational agent is defined by its ability to let users express themselves freely and then to respond to requests as naturally as possible and in a relevant manner. Reaching this Holy Grail (responding in relation to the user’s personal problem) requires taking the time to properly train your language recognition engine.

In this respect, the most effective way remains to “feed the bot” with as many sentences representative of how users formulate their requests as possible. This step is crucial to the adoption of the chatbot from its first interactions and remains necessary as the services provided by the bot evolve. These learning phases have often been underestimated or even hidden from companies blinded by the promises of “self-machine-learning”.
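The effect of "feeding the bot" can be illustrated with a toy matcher that compares an incoming message with the example sentences it has been given. This is a simplified stand-in for a real language recognition engine, assuming word-overlap (Jaccard) similarity and an arbitrary threshold of 0.3; the class name, intent name and sentences are hypothetical.

```python
import re


def tokens(sentence: str) -> set:
    """Lower-case the sentence and split it into a set of words."""
    return set(re.findall(r"[a-z']+", sentence.lower()))


def similarity(a: set, b: set) -> float:
    """Jaccard similarity: shared words divided by all distinct words."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


class TinyIntentMatcher:
    """Toy matcher: picks the intent of the most similar example sentence."""

    def __init__(self, threshold: float = 0.3):
        self.examples = []  # list of (word set, intent) pairs
        self.threshold = threshold

    def feed(self, intent: str, sentences: list) -> None:
        """Add representative user sentences for an intent."""
        for sentence in sentences:
            self.examples.append((tokens(sentence), intent))

    def predict(self, message: str):
        """Return the best-matching intent, or None if nothing is close enough."""
        words = tokens(message)
        best_score, best_intent = 0.0, None
        for example, intent in self.examples:
            score = similarity(words, example)
            if score > best_score:
                best_score, best_intent = score, intent
        return best_intent if best_score >= self.threshold else None


bot = TinyIntentMatcher()
bot.feed("track_order", ["where is my order", "track my parcel"])
before = bot.predict("has my package shipped yet")

# Feed phrasings closer to how users actually formulate the request.
bot.feed("track_order", ["has my package shipped", "when will my package arrive"])
after = bot.predict("has my package shipped yet")

print(before, after)
```

With only two example sentences the message is not recognised; once phrasings closer to real user language are added, the same message maps to the right intent. The same logic explains why training has to continue as the bot's services evolve.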

It’s not all bleak. A number of successes have been achieved, especially in terms of the personality of chatbots, which can be distinguished by their way of expressing themselves, their tone (institutional or lighter), their vocabulary, or their appearance and colour.

Nonetheless, however singular it may be, personality is not enough to compensate for ignorance. And even if significant progress has been made to reach a satisfactory level of understanding for users, without relevant content to offer and personalized answers, the chatbot is close to being an unnecessary gadget.

To overcome the last challenge – providing a personalized service – the chatbot must be able to access all the company’s knowledge: its information system (IS), its customer relationship management (CRM) system and its knowledge base. But this knowledge still needs to be both structured and accessible. This is an essential step if we want to offer customers more autonomy and useful self-service on the one hand, and relieve human agents of the requests that can be met without them on the other.

But be aware: the complexity and budget associated with integration have sometimes been played down by some vendors, notably pure-play chatbot providers.

However, the chatbot is far from having said its last word, and we will see why in the second part of this blog post. We will also explain how to create a conversational agent that is more than just a gadget. Finally, we will look in detail at the different ways to efficiently measure the ROI of a chatbot.

Author: Robyn Coppell

Published On: 15th Jul 2019 - Last modified: 19th Jul 2022
