The backbone of a solid customer experience strategy is the set of survey tools you have in place. The best way to find out what your customers think is simply to ask them.

This paper is aimed at anyone in customer services with an interest in customer care, especially those who are looking to drive real operational and financial value from a voice of customer programme.

Customer service is more important than ever. Information and reviews are accessible in an instant, while social media puts businesses under constant pressure. If you mess up, there is a risk of a disgruntled customer becoming a detractor and taking their business elsewhere.

It is crucial to find out what your customers think before everybody else does. Today’s wide range of modern surveying tools is no longer just “nice to have”: the tools have become actionable, accessible and truly meaningful down to operational level for agents and team leaders.

Over the last couple of years, NPS, CES and CSAT have moved from buzzwords to integral KPIs, because the customer is finally truly in focus.

Throughout this white paper we will share some of the basics of survey methodology, based on the most common questions we receive from organisations, including:

- Sample size: how much is enough and how much is too much?

- How do we ensure we’re not too intrusive with our survey approach?

- Survey script: what should we ask our customers?

- It all sounds lovely, but how can we prove a return on investment?

Sample size

It is crucial to differentiate between two requirements of customer satisfaction (C-Sat) surveys:

- C-Sat for business intelligence

- C-Sat as an operational tool

Most modern solutions for measuring post contact satisfaction will tick both boxes. By gathering feedback on an individual level, you can drive individual behaviour and coach on results at the same time as you accumulate thousands of surveys on a monthly basis for the business needs.

This situation creates a good deal of confusion about sampling and about what a given sample size is worth. We recommend a minimum of 20 surveys per agent per month, which is a robust amount of data to continuously deliver external 360° feedback. Having this type of customer feedback available directly to the agent is so much more than statistics. In most cases it’s all about the little extra: personal appreciation on a silver platter, and a sense of recognition to boost the everyday service.

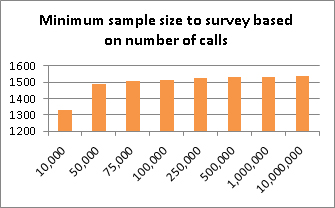

At 20 surveys per agent per month, a small contact centre with 50 seats will generate 1,000 surveys every month, which is a healthy number for sampling feedback. In fact, the graph above illustrates how many surveys you need to obtain a statistically significant* representation of the average customer’s impression. As you can see, once the customer base is reasonably large the required number of surveys hardly changes.

* Source: http://www.research-advisors.com/tools/SampleSize.htm based on 95% confidence level and 3% margin of error (common).

To put it simply: in order to get a sample overview of the average impression of your entire operation, you only need to interview around 1,500 customers. However, such information would scarcely be enough to understand what lies behind that impression, and definitely not enough to drive change. This is why setting a sample size target on an individual level makes so much sense. You will give team leaders and agents great support while generating enough data to investigate the finer details of the results. (One thing that grows very well together with larger samples is qualitative information such as verbatim comments.)
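The plateau described above can be reproduced with a short calculation. The sketch below assumes the standard sample-size formula for a proportion with a finite population correction, which may not be exactly what the cited calculator uses; the function name and default values are illustrative. The plateau depends on the chosen margin of error: at 95% confidence the formula gives a little under 1,100 surveys at a 3% margin and a little over 1,500 at a 2.5% margin.

```python
import math


def required_sample_size(population: int,
                         margin_of_error: float = 0.03,
                         z_score: float = 1.96,
                         proportion: float = 0.5) -> int:
    """Surveys needed to estimate a proportion for a customer base of a given size.

    Assumptions (not taken from the paper): the textbook formula for a
    proportion, the most conservative proportion of 0.5, and z = 1.96 for a
    95% confidence level.
    """
    # Sample size for an effectively unlimited population.
    n0 = (z_score ** 2) * proportion * (1 - proportion) / margin_of_error ** 2
    # Finite population correction: smaller customer bases need fewer surveys.
    n = n0 / (1 + (n0 - 1) / population)
    return math.ceil(n)


if __name__ == "__main__":
    for customers in (1_000, 10_000, 100_000, 1_000_000):
        print(f"{customers:>9,} customers -> {required_sample_size(customers):,} surveys")
```

Running it for customer bases from 1,000 to 1,000,000 shows the required sample growing quickly at first and then levelling off, which is why a per-agent target, rather than an overall target, ends up determining how much feedback you collect.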

From time to time we see overkill in this approach, where organisations strive to gather as many expensive surveys as possible without knowing what to do with them. If you understand what you are doing, and can utilise your findings and tools to drive change, you should not need to generate more than 20 surveys per agent per month.

Most contact centres are either neglecting the need for robust sample sizes on an individual level, or completely overdoing it and still not getting value for money. If you are actively collecting feedback from customers today, ask yourself what you are doing (and what you should be doing) with all this valuable information.

Intrusive vs non-intrusive survey types

Customer feedback is so incredibly valuable to an organisation that it’s easy to focus on the opportunities and forget about the possible drawbacks. Surveys attract a range of adjectives in everyday talk: intrusive, overwhelming, tiring, annoying and so on.

From a consumer point of view it’s near impossible to avoid being offered a survey for a whole week unless you stop browsing the internet and turn off your telephone. Even then, you should expect a feedback link on your receipt after your weekly shopping or restaurant experience.

Customer satisfaction surveys are so common today that it’s all the more important to get them right. You don’t want to be too intrusive. Consider the following examples:

| Channel | Intrusive | Non-intrusive alternatives |
| --- | --- | --- |
| Web | Huge web survey popup while browsing a website, requiring attention (either you participate or opt out). | Floating tab or widget near the edge of the screen requiring no attention at all (opt in) or a standard link on the website. |
| Email | Multiple email reminders following your contact or, even worse, being called up about your email experience. | First of all, skip the reminders: send at most one follow-up email for the survey to avoid frustration. Even better, include an opt-in link near the email signature for a seamless transition. |
| Phone | Receiving random automated calls with surveys too often is the biggest pitfall here. We recommend a bare minimum of 30 days before calling the same telephone number. NB: If somebody hangs up or opts out, that usually means they don’t want to participate for one reason or another, which is a good reason not to queue the number again for the next hour or day. | Automated post-call IVR surveys should take place as soon after the relevant contact as possible. Turn off any repeat calls or reminder calls. Text/SMS surveying is also a good non-intrusive alternative following a phone call, as text messages are easy to ignore. |
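The phone guidance above can be expressed as a simple eligibility check before a number is queued for a survey call. This is a minimal sketch rather than a description of any particular dialler: the function name and parameters are hypothetical, and it assumes that an opt-out excludes the number altogether and that a hang-up is recorded as a survey attempt like any other.

```python
from datetime import date, timedelta
from typing import Optional

# The paper recommends a bare minimum of 30 days between survey calls
# to the same telephone number.
MIN_DAYS_BETWEEN_CALLS = 30


def may_call(today: date,
             last_survey_attempt: Optional[date],
             opted_out: bool) -> bool:
    """Return True if a number may be queued for an automated survey call.

    last_survey_attempt is the date of the most recent survey call to the
    number, including calls where the customer hung up (an assumption made
    for this sketch). None means the number has never been surveyed.
    """
    if opted_out:
        # Assumption: an explicit opt-out removes the number from surveying.
        return False
    if last_survey_attempt is None:
        return True
    return today - last_survey_attempt >= timedelta(days=MIN_DAYS_BETWEEN_CALLS)
```

For example, a number last surveyed on 15 February would not be eligible again on 1 March, while a number last surveyed on 1 March becomes eligible on 31 March.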

When surveying customers for feedback you are asking them for information and usually giving something in return. This something can take many shapes and forms, for example:

- A promise to improve customer services for you and others in the future.

- An opportunity to vent frustration in the case of a negative experience.

- An assumption of altruism: that everybody loves helping.

Segmentation and questions

As we discussed earlier, sampling 1,500 of your customers would only be enough for checking the pulse. By collating more specific data and better understanding where improvements can be made, you make your survey approach more meaningful.

The larger your contact centre, the more likely it is that you have different teams specialising in different queries. There might be one sales department and one service department, both with a range of teams dealing with various queries. It makes sense to reflect these differences in your survey strategy, especially when scripting the questionnaires.

Example of IVR survey tailor-made for a generic service department:

- How satisfied were you with our speed to answer?

- How knowledgeable did you find the advisor?

- How helpful did you find the advisor?

- Overall, how satisfied were you with the call?

- How likely are you to recommend us to a friend or family member? (NPS)

- Was your query resolved in the call? (Yes/No)

- Plus the ability to record a comment.
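The paper does not spell out how the recommendation question is scored. As a minimal sketch, assuming the conventional NPS scale of 0 to 10 where ratings of 9 and 10 count as promoters and ratings of 0 to 6 as detractors, the score for a batch of responses could be calculated like this (the function name is illustrative):

```python
from typing import Sequence


def net_promoter_score(ratings: Sequence[int]) -> float:
    """Percentage of promoters minus percentage of detractors.

    Assumes the conventional 0-10 scale: 9-10 are promoters, 0-6 are
    detractors and 7-8 are passives, which only count towards the total.
    """
    if not ratings:
        raise ValueError("at least one rating is required")
    promoters = sum(1 for rating in ratings if rating >= 9)
    detractors = sum(1 for rating in ratings if rating <= 6)
    return 100 * (promoters - detractors) / len(ratings)
```

With ten responses of which five are promoters and three are detractors, the score is 20. Calculating the same figure per agent or per team is what turns the question into an operational measure rather than a headline number.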

Similar questions can be created to track the service experience at all levels of customer contact. Consider the interaction, be it in store, in the contact centre, on the web or at home, and create survey questions to suit it. Try to keep a common thread running through your question sets so that results can be compared consistently across them.

The key to building a successful post-contact survey is to keep it short and simple. Two minutes is a good benchmark for IVR surveys, and no more than 6-7 questions regardless of channel.

Investment

There is no doubt that implementing powerful tools for measuring customer satisfaction at an agent level will have an impact on agent engagement. The biggest question remains, though: what is the return on investment?

It has always been difficult to quantify the actual value of improved customer satisfaction. What is an increase of 5 NPS points worth? How much would you pay to improve your satisfaction levels from 75% to 80%? Yes, there is clearly some value attached, but how much?

If you take a look at first call resolution, or FCR, it’s actually possible to make a realistic estimate. Consider an FCR of 70% (70% of all calls were resolved in first contact) for a contact centre handling 1,000,000 calls every year. If we assume each unresolved case requires another point of contact, that’s 300,000 extra calls. Even a one percentage point increase in FCR would reduce those extra calls by 10,000, saving your business £40,000 (which implies a cost of £4 per call).
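The same estimate can be rerun with your own figures. The sketch below follows the reasoning in the paragraph above and assumes one extra call per unresolved case; the cost of £4 per call is not stated in the paper but is implied by its numbers (£40,000 divided by 10,000 calls). The function names are illustrative.

```python
def repeat_calls(annual_calls: float, fcr_percent: float) -> float:
    """Extra calls per year, assuming one repeat call per unresolved case."""
    return annual_calls * (100 - fcr_percent) / 100


def fcr_saving(annual_calls: float,
               fcr_gain_points: float,
               cost_per_call: float) -> tuple[float, float]:
    """Return (calls avoided, money saved) for a gain in FCR.

    fcr_gain_points is the improvement in percentage points,
    for example 1.0 for a move from 70% to 71%.
    """
    calls_avoided = annual_calls * fcr_gain_points / 100
    return calls_avoided, calls_avoided * cost_per_call


if __name__ == "__main__":
    print(repeat_calls(1_000_000, 70))       # extra calls at 70% FCR
    print(fcr_saving(1_000_000, 1.0, 4.0))   # effect of one extra point of FCR
```

Because the saving scales linearly with both call volume and cost per call, a larger operation or a more expensive channel makes the business case proportionally stronger.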

Depending on the implementation, you may also see a number of benefits such as lower attrition, saving time for team leaders, cutting down on other QA and more. We can guarantee that by enabling your customers to leave feedback, your complaints department will receive fewer calls.

Summary

There is no doubt a good C-Sat solution is worth the investment as long as it’s done properly.

Forecast the number of surveys you need based on surveys per agent (20 per month recommended), and make sure you don’t upset your customers by delivering intrusive surveys or clunky questionnaires.

When introducing a new customer satisfaction survey in your contact centre, we suggest starting off with a baseline of 500-1,000 calls without telling the agents. As you roll it out, you will be surprised how the numbers jump up purely by agents knowing they might be surveyed. Try it!

For more information or to find out how Bright could add value to your customer insight programmes please visit www.brightindex.co.uk

Author: Rachael Trickey

Published On: 8th Sep 2016 - Last modified: 18th Sep 2019
