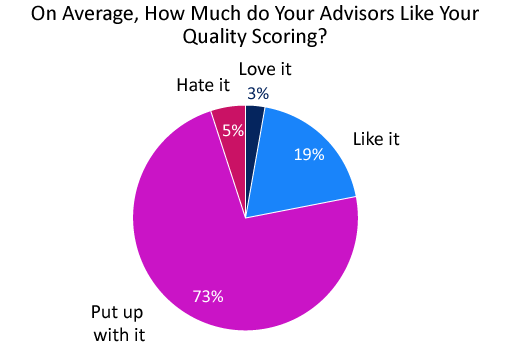

Almost three-quarters of contact centre advisors just “put up with” their quality assurance (QA) programme. So how can you address this?

We share the full results of our surprising research, before presenting a number of tried and tested tips from some of the leading contact centres within the quality space, for how to turn things around.

Most Advisors “Put Up With” Their Quality Programme

In a recent poll of 127 industry professionals, we found that 73% of contact centre teams just “put up with” their QA programme, meaning that gaining buy-in is a common problem.

This poll is based on research that was conducted in our webinar: Proven Ways to Drive up Contact Centre Quality

When advisors fail to buy in to your QA programme, your contact centre will likely miss out on many of the benefits associated with this key process.

The benefits of having a great quality programme that engages advisors include: positively changing advisor behaviour, gaining a motivational tool and better ensuring compliance.

6 Expert Ways to Improve Advisor Buy-In

With these benefits in mind, we asked readers whose teams either “love” or “like” their quality programme for their advice on increasing advisor engagement with quality.

1. Advisor Self-Scoring

Many of the contact centres with great quality programmes told us that advisor self-scoring is an initiative that has worked really well for them.

This can be a great method to engage advisors with the quality scorecard and help them better understand what is expected of them, especially if you compare their results with yours.

However, according to our reader Liam, there are a number of other “obvious” benefits, including:

- Advisors can see how the process works

- Advisors can see contacts from a QA perspective

- Advisors can begin to recognize the challenge in assessing certain contacts

Just remember, if advisors are self-scoring their calls, make sure that you score the same contact alongside the advisor, so that you can compare scorecards and discuss the differences.

By doing this, you are engaging advisors with the process and guarding against individuals who will always give themselves a score of 100%, without fully concentrating on each contact that they listen to.
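
If your scorecards are captured digitally, the side-by-side comparison can be sketched in a few lines. This is only an illustration: the criterion names, the 0 to 5 scale and the `threshold` parameter are assumptions made for the example, not part of any particular QA tool.

```python
# Hypothetical scorecards: criterion name -> points awarded (0-5 scale assumed).
def compare_scorecards(self_scores, qa_scores, threshold=1):
    """Return the criteria where the advisor's self-score and the QA score
    differ by more than `threshold` points (positive = advisor scored higher)."""
    gaps = {}
    for criterion, qa_score in qa_scores.items():
        if criterion not in self_scores:
            continue  # advisor did not score this criterion
        diff = self_scores[criterion] - qa_score
        if abs(diff) > threshold:
            gaps[criterion] = diff
    return gaps


# Example: an advisor who awards themselves full marks across the board.
advisor = {"greeting": 5, "empathy": 5, "resolution": 5, "compliance": 5}
analyst = {"greeting": 5, "empathy": 3, "resolution": 4, "compliance": 5}

print(compare_scorecards(advisor, analyst))
```

The criteria that come back are the ones worth discussing in the one-to-one, rather than walking through every line of the scorecard.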

2. Timestamp Key Moments for Advisors to Focus On

While it would be great for advisors to go through 20+ calls every week and self-score with a coach or team leader, for many that’s just not realistic with the time that they have for QA.

So these contact centres need to think of how they can really maximize the value of the time that they are spending with the team, and our reader Alex recommends highlighting key parts of contacts to focus on.

“Rather than having your advisor spending valuable time listening to the whole conversation to score it, get your QA team to timestamp the key parts of the interaction that had the most impact (positive or negative) to help make things a little more efficient,” says Alex.

“This also allows you to build a library of ‘key moments’ to share with other advisors as a coaching tool.”

Again, this is a great way to bring QA and coaching together, but you just need to be wary of being overly critical in your timestamps.

One alternative method is to feed back the scorecard and the recording while noting these key moments. This will allow you to make lots of kind annotations and spread positive messages to advisors, showing your recognition.

I discussed creating a system like this with QA expert Martin Teasdale on a recent episode of The Contact Centre Podcast, which you can listen to below.

The Contact Centre Podcast – Episode 8:

How To Extract More Value From Your Call Center Quality Programme

For more information on this podcast visit Podcast: How to Extract More Value From Your Contact Centre Quality programme

3. Make QA an Extension of Training

Negative perceptions of quality may be due to advisors viewing your programme as a method of trying to catch them out instead of supporting their development.

So, you need to emphasize to advisors that quality monitoring is a process that has been implemented primarily as a coaching method, not as a tool for assessment.

“It’s important to create a culture in which quality is an extension of training and not a departmental initiative trying to catch agents in the act of doing something wrong,” says Susan, one of our readers.

“That messaging needs to start in onboarding and continue throughout the life cycle of training and one-to-one meetings etc.”

Learning, after all, does require effort, so encouraging your teams to embrace quality as a coaching tool early and to view it as a worthwhile opportunity is key.

4. Link Back Training to the Expectations of Your QA Framework

To improve advisor performance you should define what “good” looks like, so your team know what is expected of them. But this definition needs to be consistent.

You will likely have defined what is expected from advisors on your quality scorecard and you need to consider whether or not this aligns with your training priorities and the priorities of the wider business.

Any mixed messages will confuse advisors and will harm their perceptions of your quality programme.

You also want to ensure that you are focusing on the areas of most value in terms of driving other business metrics.

“For your quality programme to be effective, it must be accepted by the people you are evaluating,” adds our reader Denis.

“It should certainly reflect service expectations, but it should also be perceived as fair in order for the feedback to be accepted, and you should spend the required time to make that acceptability happen before you can use your QA programme effectively.”

Find our advice for creating the ideal quality framework and scorecard in our article: How to Create a Contact Centre Quality Scorecard – With a Template Example

5. Coach, Don’t Consult!

A key theme among those contact centres with great buy-in towards their coaching programme was that coaching should not involve telling advisors what they must do and where to improve, but needs to involve working collaboratively.

So, you need to look at the mechanisms of how you give feedback during quality monitoring and ensure that you have a basic knowledge of some of the key contact centre coaching models.

Providing immediate coaching, and not consulting, through a good quality scorecard is great for finding discrepancies during the one-on-ones, according to Marco, another of our readers.

Marco adds: “Set expectations for improvement and meet again within 30 days to measure that, recognizing the advisor for their improvements and continuing to provide support.”

However, you need to be careful of how you frame your expectations for improvement and that’s where you go back to your coaching models, to ensure your feedback is as constructive as possible.

To find out more about these models, read our article: Contact Centre Coaching Models: Which Is Best for Your Coaching Sessions?

6. Get Advisors Involved in Calibration Sessions

The trouble with scoring contacts in many call centres is that many of the criteria are subjective. When this is the case, an advisor may become disillusioned with the process if they were marked down for something that their colleague wasn’t, even though their contacts were very similar.

If this happens, a feeling that the QA process is biased may start to form amongst the contact centre team, which will harm advisor buy-in.

Much of your scorecard will be subjective, and it can be very difficult to find ways around this. What you can do is invite a couple of advisors to each calibration session that you run.

By doing this, you can show the team how important quality is to you and how you are working to remove subjectivity, with open discussion and a session leader, who makes final decisions based on the conversation between analysts.
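
To give the discussion a starting point, you could look at how far apart the scores are for each criterion when several people assess the same contact. The sketch below is a simple illustration under assumed names and an assumed 0 to 5 scale. It uses the gap between the highest and lowest score as a rough indicator of subjectivity, which is one possible measure among many.

```python
# Hypothetical calibration data: each participant scores the same contact.
def calibration_spread(scores_by_participant):
    """Return criterion -> (highest score - lowest score) across participants.
    A larger spread suggests the criterion is being interpreted differently."""
    criteria = set()
    for scores in scores_by_participant.values():
        criteria.update(scores)

    spread = {}
    for criterion in sorted(criteria):
        values = [s[criterion] for s in scores_by_participant.values()
                  if criterion in s]
        spread[criterion] = max(values) - min(values)
    return spread


session = {
    "analyst_a": {"greeting": 5, "empathy": 2, "resolution": 4},
    "analyst_b": {"greeting": 5, "empathy": 4, "resolution": 4},
    "advisor_guest": {"greeting": 4, "empathy": 5, "resolution": 3},
}

print(calibration_spread(session))
```

Criteria with the widest spread are natural candidates for the session leader to open up for discussion first.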

Mathew, another of our readers, suggests going one step further, saying: “Invite colleagues from business areas that have nothing to do with the contact centre/customer service to join QA calibration sessions.”

“Those of us who work in the contact centre know too much, and colleagues that have nothing to do with the contact centre will view the process like customers who have not contacted the business before.”

For advice on how to run the ideal quality calibration session, read our article: How to Calibrate Quality Scores

In Summary

Fewer than 22% of advisors like or love their contact centre’s QA programme, highlighting that many organizations are failing to obtain many of the benefits that come with having a team that is invested in quality.

These benefits include changing advisor behaviours and using scorecards as a motivational tool, which are huge in improving performance and engagement.

So, to fight against these worrying findings and help you seize these benefits, we have presented a number of tried and tested ways to improve advisor buy-in to your quality programme, as recommended by our readers.

For more best practices regarding contact centre QA, read our articles:

- 10 Ways to Improve Call Centre Performance Management

- How to Assess Quality on Email and Live Chat in the Contact Centre

- Call Centre Quality Parameters: Creating the Ideal Scorecard and Metric

The thoughts of our readers were gathered in our webinar: Proven Ways to Drive up Contact Centre Quality

Author: Robyn Coppell

Published On: 2nd Dec 2019 - Last modified: 14th Aug 2025
