Paul Cooper looks at the way that we survey our customers, and draws some alarming conclusions.

Last month, I had two linked experiences of muddled customer service thinking.

Firstly, I was at Stansted Airport, awaiting a flight to Amsterdam to speak at a conference there – on customer service, of course. I had just passed through security and this lady grabbed me and stuck a survey in front of my nose. “Would you be happy to answer these questions?” she said. “No,” I replied, “but I will.” This threw her for a start. But we moved on.

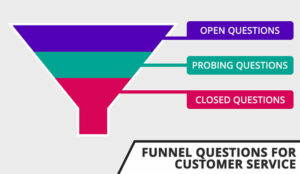

All the questions were about the security process, and as she worked her way through the 9 questions, each scored out of 5 (which is not the best system, but I digress), I gave 4 or 5 to each question. Then she reached question number 10. “How would I rate the overall experience?” I replied 0 or 1.

“I don’t understand,” she said. “How can you rate all the others so high and then this one low?” The reason was simple. All the other questions were hygiene factors – did someone smile, did they ask the right questions, were they polite, etc.? However, despite the fact that I fully understand the need for security checks, the experience was still awful. It took too long, there were constant inconsistencies over such things as removal of shoes or not, belts or not, liquids, etc., and the whole impression given was that no one there seemed to have ever done it at all before that morning. Clearly no one was learning from the customers, or working on improving the process.

When I got to Amsterdam, I checked into the very nice hotel on the outskirts of the city where the conference was being held. I stayed two days, and at the end was asked to fill out one of those cards that pretend to be about customer service.

Again, most of the questions were about hygiene factors – cleanliness of room, comfort of bed, mini-bar stocked correctly, etc. All were diligently marked 9 or 10 – at least they got the scaling right.

However, the last one was about the overall stay – I marked it 1. There wasn’t anyone there to question me this time, but surely someone reading the results would ask me why if they could, unless, as I suspect, it was a form-filling exercise.

Again, they hadn’t thought it through to the customer experience.

Firstly, the location of the hotel, which was pretty expensive, was far too far from the city centre to make travelling into the centre a pleasure – a taxi was the only alternative. Even more annoying, however, was the fact that every time one wanted to go back to one’s room, one had to put the keycard into a slot in the lift. A small thing, you may say, but firstly, one usually had suitcase, briefcase, papers, etc. in the hand so it wasn’t convenient to root around for the stupid card.

Secondly, in the two days, I had to go back to the desk 3 times to get a new one because it had lost its memory (“too close to your mobile phone, sir”, as if that was my fault).

Thirdly, every time someone new got into the lift for a different floor they had to do it as well, and lastly and most importantly, I, as a customer, was actually doing their security job for them. Interestingly enough, I did a straw poll at the conference and virtually every delegate found this annoying and anti-customer service, and said they would tell others.

The issue with so many of these surveys is that the questions are all about what the organisation wants to know, or tell its bosses, and not about really listening to the customer and what we want to tell them, despite the fact that this is totally free research, given at exactly the right moment for maximum benefit.

So, I encourage you all: if you use these sorts of surveys, point these things out to your organisation, and if you get the chance to fill one in, always do so, but with the real truth of your heartfelt feelings.

But, maybe more important than everything else, before you issue these things, ask the staff first – they usually know, sooner and better than anyone, that you’re doing something daft! And how to correct it!

Paul A Cooper is a Director at Customer Plus.

Author: Jo Robinson

Published On: 4th Sep 2013 - Last modified: 28th Oct 2025


I would have thought all surveys should have a comments field on the form. We have this on all surveys and gain huge insight from customers’ feedback.