Most contact centres measure customer satisfaction (CSat). So why is it so important, and what are the benefits of measuring it?

After a quick recap of how to calculate CSat, we answer both of these questions and investigate how to obtain more actionable insight from the measure.

Measuring Customer Satisfaction

While CSat is a metric that has been calculated for decades within many organizations, contact centres vary greatly in how they do so.

For example, we have known contact centres to calculate CSat by:

- Calculating a percentage score

- Using happy, neutral and sad emojis

- Using star ratings

- Applying the Net Promoter Score (NPS) method

- Analysing customer conversations
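The first and fourth methods on the list involve simple arithmetic, so they are easy to sketch. The example below assumes common conventions rather than anything this article prescribes. CSat is the percentage of respondents choosing 4 or 5 on a 1-5 scale. NPS is the percentage of promoters (9-10 on a 0-10 scale) minus the percentage of detractors (0-6). The function names and sample scores are hypothetical.

```python
def csat_percentage(scores, satisfied_threshold=4):
    """Share of responses at or above the threshold, as a percentage (assumes a 1-5 scale)."""
    if not scores:
        raise ValueError("no survey responses")
    satisfied = sum(1 for s in scores if s >= satisfied_threshold)
    return 100 * satisfied / len(scores)


def net_promoter_score(scores):
    """Percentage of promoters (9-10) minus percentage of detractors (0-6) on a 0-10 scale."""
    if not scores:
        raise ValueError("no survey responses")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


csat_responses = [5, 4, 3, 5, 2, 4, 5, 4, 1, 5]   # hypothetical 1-5 ratings
nps_responses = [10, 9, 8, 7, 6, 10, 3, 9, 8, 10]  # hypothetical 0-10 ratings

print(f"CSat: {csat_percentage(csat_responses):.0f}%")
print(f"NPS: {net_promoter_score(nps_responses):+.0f}")
```

Other thresholds are equally valid (some teams count only the top box). What matters most for benchmarking is using the same definition every time.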

Whichever method we apply, we can use our findings to eliminate pain points and learn, over time, how to create happier customers. This leads to the first reason CSat is so important: benchmarking.

To find out more about each of the above methods for calculating CSat, visit: How to Calculate a Customer Satisfaction Score (CSat)

It’s a Great Measure to Benchmark

Tracking customer satisfaction over time is valuable because it shows how well our customers have received any changes we have made to our business.

As Neil Hammerton, the CEO of Natterbox, says: “We now have a wealth of choice in most industries – with traditional players and digital natives competing for market share. If these companies want to survive, they have to prioritize customer satisfaction and offer a premium customer service.”

“If companies don’t do this, customers will be quick to shout about their bad experiences online and ultimately turn to the competition.”

With this in mind, it is good practice to benchmark our CSat performance to assess how customers are responding to our changes. Benchmarking can also be used to set internal CSat targets.

For example, if we have an overall CSat rating of 80%, we can set a target of reaching 85% in a certain amount of time. Then, we can focus on what we need to do to “move the needle”.
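The sketch below puts the 80% to 85% example in concrete terms. It works out how many more satisfied responses a hypothetical sample of 1,000 surveys would need. It assumes that CSat is the share of satisfied responses and that the number of responses stays the same, although real survey volumes rarely hold steady.

```python
import math


def extra_satisfied_needed(satisfied, total, target_pct):
    """Additional satisfied responses needed to reach target_pct, holding total constant."""
    required = math.ceil(total * target_pct / 100)
    return max(0, required - satisfied)


satisfied_now = 800  # hypothetical: 800 of 1,000 respondents satisfied
total = 1000
print(f"Current CSat: {100 * satisfied_now / total:.0f}%")
print(f"Extra satisfied responses needed for 85%: {extra_satisfied_needed(satisfied_now, total, 85)}")
```

Seen this way, moving the needle by five percentage points means turning 50 of every 1,000 respondents from neutral or dissatisfied into satisfied.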

But don’t focus only on failures when trying to improve CSat. Also assess what we have done that has already improved CSat, and analyse how we can repeat that success to create happier customers.

For more on this topic, read our article: Contact Centre Benchmarking – How to Get More From Your Metrics

You Can Explore the Relationship Between CSat and Other Metrics

As well as benchmarking CSat, we can analyse how a 1% rise in CSat impacts other metrics, which can be particularly useful if we want to build a business case. This is because our plans could help to generate or save money in areas that we hadn’t previously considered.

So, when building a business case, revenue is a particularly useful metric to assess against CSat, as it will help you to provide a projected ROI for your plans.

Mark Ungerman, Director of Product Marketing at NICE inContact, adds: “When CSAT increases, a firm will often experience a corresponding cost decrease in sales and customer service.”

“In addition, by lowering the number of unhappy customers, we can also drive down support costs because they place and escalate more support calls, which consume more time, resources and other concessions.”

Another key metric to analyse against CSat when building a business case is customer lifetime value (CLV) because, in theory, when CSat improves, so does a customer’s lifetime value.

After all, a happy customer is more likely to make repeat purchases and to refer family and friends.

But exploring CSat’s relationship with other metrics can serve other purposes too, such as setting an appropriate queue-time target.

If you chart the correlation between CSat and queue time, and between abandon rate and queue time, you can set an appropriate service level for your customers, tailored to each channel.

There is also great value in examining the relationship between CSat and quality scores…

Quality Scorecards Should Be Tested Against CSat

While quality scorecards should include criteria that reflect what matters to your business, e.g. compliance, they should be designed primarily to reflect what matters most to your customers.

While we should be weighting each element of the scorecard by assessing its impact on CSat, it is also good practice to measure CSat alongside quality scores to examine whether we are assessing advisors on what customers value most.

To do this, when testing the quality scorecard, we should assess contacts from a range of customers who left a CSat score after their interaction.

Then, we need to plot quality scores against CSat scores and look for correlation. The stronger the correlation, the better your quality scorecards reflect what your customers want.

This technique is recommended by Tom Vander Well, the President & CEO of Intelligentics, and is known as “regression analysis”.

By building and testing quality scorecards through using CSat in this way, Tom says that we can improve overall performance by:

- Making advisor training more effective (customer focused, data-led)

- Reducing time needed to improve CSat

- Reducing waste as resources can be allocated where they’ll have impact

- Catching systemic issues early, before they become costly

- Creating internal efficiencies (no arguing over what’s important)

Just remember to create separate scorecards for different channels, as what matters most to your customers, and perhaps to your business, will vary by channel.

For more advice from Tom on building the best scorecard, read our article: Call Centre Quality Parameters: Creating the Ideal Scorecard and Metric

Splitting CSat Between Channels and Contact Reasons Is a Good Exercise

To analyse CSat in more detail, we can also split the metric across different channels and contact reasons to gain further insight.

Martin Jukes, the Managing Director of Mpathy Plus, says: “When organizations measure customer satisfaction, it’s ordinarily about the overall service instead of how they use that channel.”

“But we want to find out where the sources of satisfaction and dissatisfaction come from, so we can improve. So I think that understanding which channel the customer was using and categorizing that is really important.”

As Martin suggests, the key to measuring CSat is making sure that it gives us actionable insight. An overall CSat score can be a nice “pat on the back” measure, but measuring something is a waste of time unless we can act on what it tells us.

But if we compare CSat across different channels, we can look into why some have higher scores than others and highlight key areas in which we need to improve.

The same goes for key contact reasons, as we can clearly see the difference in satisfaction when we change the processes and systems that handle each contact reason.
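The sketch below shows one way to split CSat by channel and by contact reason from raw survey responses. It assumes that each response records its channel, its contact reason and a 1-5 score, and it counts a 4 or 5 as satisfied. The data and the `csat_by` helper are hypothetical.

```python
from collections import defaultdict

# Hypothetical survey responses: (channel, contact reason, score on a 1-5 scale)
responses = [
    ("phone", "billing", 4), ("phone", "billing", 2), ("phone", "delivery", 5),
    ("chat", "billing", 5), ("chat", "delivery", 3), ("chat", "delivery", 2),
    ("email", "billing", 5), ("email", "delivery", 4),
]

CHANNEL, REASON, SCORE = 0, 1, 2  # field positions in each response tuple


def csat_by(responses, key_index, satisfied_threshold=4):
    """CSat % (share of scores >= threshold), grouped by the field at key_index."""
    totals = defaultdict(int)
    satisfied = defaultdict(int)
    for row in responses:
        key = row[key_index]
        totals[key] += 1
        if row[SCORE] >= satisfied_threshold:
            satisfied[key] += 1
    return {key: 100 * satisfied[key] / totals[key] for key in totals}


by_channel = csat_by(responses, CHANNEL)
by_reason = csat_by(responses, REASON)
print("By channel:", {k: round(v, 1) for k, v in by_channel.items()})
print("By contact reason:", {k: round(v, 1) for k, v in by_reason.items()})
```

Grouping on a (channel, reason) pair works the same way and can reveal, for example, that billing queries are handled well by email but poorly on chat.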

In fact, if we wish to take this one step further, we can assess satisfaction across the entire customer journey for each contact reason…

We Can Assess How CSat Varies Along the Customer Journey

While we may be the only ones who measure CSat, creating customer satisfaction should be the responsibility of the entire organization, not just the contact centre.

This is why many organizations have adopted an NPS Champions scheme, and there is no reason why we can’t do the same for CSat.

The scheme would work by measuring CSat to find pain points along the customer journey. When a “pain point” is found, we tell the CSat champion in the relevant department. It would then be their responsibility to implement a process to remove the pain point.

Of course, this requires buy-in from each department within the organization, and we would also have to change the way we measure CSat.

We can do this by adding a follow-up question when a customer gives us a low-satisfaction score. This question could be something like: “In which areas could we improve as an organization?”

Be sure to only do this to customers who have left a negative CSat score, though – we don’t want happy customers to start thinking about our flaws!
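Here is a minimal sketch of the survey and champion logic described above, assuming a 1-5 scale on which a 1 or 2 counts as low satisfaction. The follow-up wording comes from this article. The `CHAMPIONS` mapping, the department names and the keyword matching used to tag pain points are hypothetical stand-ins for however an organization actually categorizes feedback.

```python
LOW_SCORE_MAX = 2  # assumption: 1-2 on a 1-5 scale counts as "low satisfaction"
FOLLOW_UP = "In which areas could we improve as an organization?"

# Hypothetical mapping from a pain-point keyword to the relevant department's CSat champion
CHAMPIONS = {
    "invoice": "Finance champion",
    "delivery": "Logistics champion",
    "website": "Digital champion",
}


def follow_up_question(score):
    """Return the follow-up question only for low scores; happy customers are not asked."""
    return FOLLOW_UP if score <= LOW_SCORE_MAX else None


def route_pain_point(comment):
    """Return every champion whose keyword appears in the customer's comment."""
    text = comment.lower()
    return [champion for keyword, champion in CHAMPIONS.items() if keyword in text]


print(follow_up_question(5))
print(follow_up_question(1))
print(route_pain_point("My delivery was late and the invoice was wrong"))
```

Keyword matching is deliberately crude here. In practice, tagging could be done by advisors or by analytics tools.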

To identify these pain points without asking customers directly, Colin Hay, Vice President of Puzzel, suggests that we use speech analytics instead of traditional CSat surveys.

Colin says: “Use analytics to monitor all interactions as they happen and provide the hard evidence to make meaningful changes to service delivery.”

“It’s time to make CSat a key part of your CX programme. Just be sure to make it a regular form of continuous improvement by acting on feedback, good and bad, to derive the greatest benefits.”

CSat Scores Can Indicate How You Should Treat Individual Customers

To measure CSat, we generally survey individual customers. Their responses are not just valuable for wider CSat calculations; they also give us insight into what we need to do to increase each customer’s engagement with us.

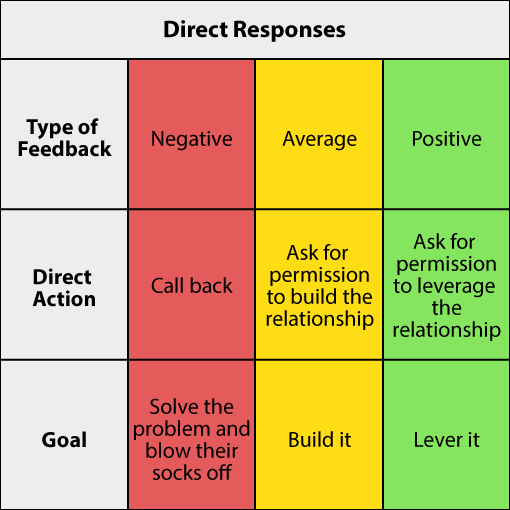

A proactive organization will gather CSat scores and group customers into three categories: negative, neutral or positive.

How we do this will depend on which scale we use to measure it, whether it’s through happy, neutral and unhappy emojis or through a numbered scale.

Either way, if we split individuals into these groups, we can create a strategy for how we communicate with them, market to them and route their future contacts.
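The sketch below shows one way to sort responses into the three groups when different surveys use different scales. The cut-offs are assumptions rather than an industry standard. The 0-10 bands simply mirror the NPS convention of detractors, passives and promoters. The customer IDs are hypothetical.

```python
def categorize(score, scale):
    """Map a raw CSat response to 'negative', 'neutral' or 'positive'.

    The cut-offs for each scale are assumptions, not an industry standard.
    """
    if scale == "emoji":
        return {"sad": "negative", "neutral": "neutral", "happy": "positive"}[score]
    if scale == "1-5":
        if score <= 2:
            return "negative"
        return "neutral" if score == 3 else "positive"
    if scale == "0-10":
        # Mirrors NPS bands: 0-6 detractor, 7-8 passive, 9-10 promoter
        if score <= 6:
            return "negative"
        return "neutral" if score <= 8 else "positive"
    raise ValueError(f"unknown scale: {scale}")


customers = [("C001", 2, "1-5"), ("C002", "happy", "emoji"), ("C003", 8, "0-10")]
groups = {"negative": [], "neutral": [], "positive": []}
for customer_id, score, scale in customers:
    groups[categorize(score, scale)].append(customer_id)
print(groups)
```

Each group can then drive a different communication, marketing or routing rule.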

We can even go as far as setting up a system to systematically respond to customers who have left a CSat score, in order to increase their engagement and potentially their value.

For such a system to work, we could follow up with customers directly, as Guy Arnold, Founder of Slow Selling, suggests in the article “How to Get the Silent Majority to Respond to a Customer Survey”. This is presented in the table below:

Brent Bischoff, Cloud and Data Services Consultant at Business Systems, likes the idea of having such a system in place to help retain customers.

Brent says: “It’s crucial to keep an eye on the quality of customer service you provide. In fact, research says that it is 6-7 times more expensive to acquire a new customer than it is to keep a current one. So don’t take loyalty for granted!”

In Summary

While CSat is often calculated simply because it’s a contact centre tradition, there is a lot of value in doing so, as long as it is measured in the right way.

By this we mean splitting it across different channels and contact reasons, as well as benchmarking it over time. This will give us some really actionable insight.

Then, we need to think about CSat’s relationship with other metrics, such as revenue, queue time and quality scores, just to name a few. By doing so, we can improve our business cases, operating efficiencies and our scorecards.

Finally, think about CSat on a score-by-score basis. Individual scores give us insight into how each customer is feeling, which is useful to know when we are trying to provide great customer service.

For more on the topic of customer satisfaction, read our articles:

- How to Get More From Your Customer Satisfaction (CSat) Scores

- 9 Strategies to Improve Customer Satisfaction

- 21 Practical Techniques to Boost Customer Satisfaction in Six Weeks

Author: Charlie Mitchell

Reviewed by: Robyn Coppell

Published On: 14th Aug 2019 - Last modified: 21st Jan 2026
